Now to the meat of the problem: how the tasks actually run. Some of these tasks are going to run in parallel, and that could cause threading issues. Looking at the original challenge, there is an obvious sticking point. The two tasks ‘Preheat heater’ and ‘Mix reagent’ need to notify ‘Heat Sample’ that they are done in a thread-safe way, so I will need a mutex. Alternatively I could use a thread-safe Boost signal (boost::signals2, the thread-safe successor to boost::signal) to inform the node that a task has finished, but that’s a bit heavyweight.

There is another way, though. If the computer maintains the state of each node, and the DAG is guaranteed not to change, then each node just needs to know how many inputs it has and keep a counter of how many of them have completed. std::atomic is designed for cases like this.
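To make the counter idea concrete, here is a rough sketch of how it might look. The `Node` type, `input_done` and `run_two_inputs` are names I've made up for illustration, and the memory ordering is my assumption about what is sufficient rather than something I've measured.

```cpp
#include <atomic>
#include <cassert>
#include <thread>

// Hypothetical node type; the names are illustrative only.
struct Node {
    explicit Node(int input_count) : pending(input_count) {}

    // Called once by each upstream task as it finishes.
    // Returns true for exactly one caller: the one that completed the last
    // outstanding input, which is then responsible for starting this node.
    bool input_done() {
        int before = pending.fetch_sub(1, std::memory_order_acq_rel);
        assert(before > 0 && "more completions than inputs");
        return before == 1;
    }

    std::atomic<int> pending;  // inputs that have not completed yet
};

// Runs two upstream tasks on separate threads and returns how many times
// the downstream node was triggered.
int run_two_inputs() {
    Node heat_sample(2);  // waits on 'Preheat heater' and 'Mix reagent'
    std::atomic<int> triggered{0};

    auto upstream = [&] {
        // ... the real task body would run here ...
        if (heat_sample.input_done()) {
            triggered.fetch_add(1);  // stand-in for scheduling 'Heat Sample'
        }
    };

    std::thread preheat(upstream);
    std::thread mix(upstream);
    preheat.join();
    mix.join();
    return triggered.load();
}
```

Whichever thread finishes second sees the counter drop from 1 to 0, so the downstream node is started once and no mutex is needed.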

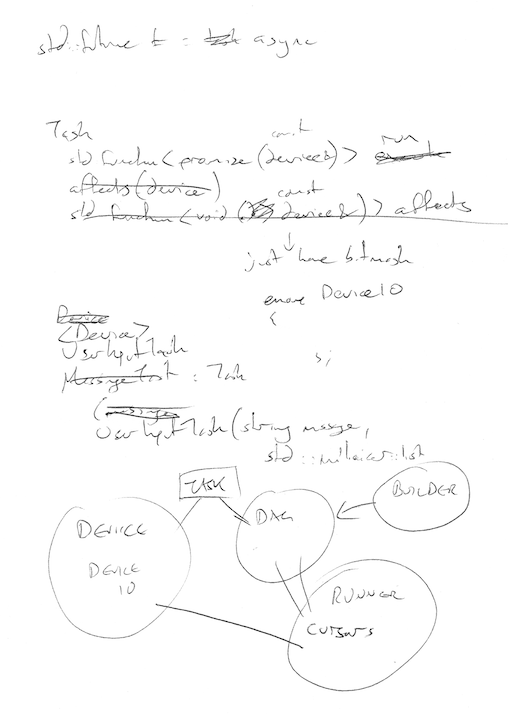

Because tasks can run in parallel, there may be more than one ‘cursor’ at the point of execution, so the computer has to store a vector of cursors. Ideally the code will avoid continually starting and stopping threads, which is a drain on resources, so a future version will use a thread pool.

I can’t believe I’m posting these scrappy doodles, but I’m chucking these pages out fast so I can crack on with code. This one is from this morning whilst waiting at the GP!

Despite my explorations in optimising the DAG, I’m going to start with a simple version using std::function calls. I will fire off the threads using std::async, which means I can take advantage of futures.
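As a first stab, launching std::function tasks with std::async could look something like the sketch below. The `Task` alias and `run_parallel` are placeholder names of mine, and returning an `int` from each task is just an assumption to keep the example small.

```cpp
#include <cassert>
#include <functional>
#include <future>
#include <vector>

// Placeholder task signature: a callable that returns a result code.
using Task = std::function<int()>;

// Launches every task on its own thread, then collects the results in the
// same order the tasks were given.
std::vector<int> run_parallel(const std::vector<Task>& tasks) {
    std::vector<std::future<int>> futures;
    futures.reserve(tasks.size());
    for (const Task& task : tasks) {
        assert(task && "calling an empty std::function would throw");
        // std::launch::async forces a new thread rather than deferred execution.
        futures.push_back(std::async(std::launch::async, task));
    }

    std::vector<int> results;
    results.reserve(futures.size());
    for (std::future<int>& f : futures) {
        results.push_back(f.get());  // blocks until that task has finished
    }
    return results;
}
```

This starts a thread per task, which is exactly the churn a thread pool would avoid later, but it is enough to get the flow working.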

Futures will be particularly useful for updating my simulated hardware device with the output from each task. I know which inputs and outputs each task affects, so the future can return a block of ‘things to update’ on the hardware when the task has completed. Given that my tasks specify what their inputs and outputs are, I can validate that parallel threads are not going to access the same inputs and outputs, and so avoid collisions when the data is written. Because of this validation, those collisions can be reported when the DAG is built, so you are guaranteed a clear flow.
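Roughly what I have in mind for the ‘things to update’ block is sketched here. `Update`, `Updates`, the cell indices and the values are all invented for the example, and the real DeviceIO will certainly look different.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <future>
#include <vector>

// Hypothetical 'thing to update': which device cell, and its new value.
struct Update {
    std::size_t cell;
    double value;
};
using Updates = std::vector<Update>;

// Hypothetical simulated device with a handful of numbered cells.
struct DeviceIO {
    std::array<double, 4> cells{};

    void apply(const Updates& updates) {
        for (const Update& u : updates) {
            assert(u.cell < cells.size());
            cells[u.cell] = u.value;
        }
    }
};

// Made-up cell indices and placeholder values.
constexpr std::size_t kHeaterTemp = 0;
constexpr std::size_t kReagentMixed = 1;

// Tasks never touch the device directly; they only describe their changes.
Updates preheat_heater() { return Updates{Update{kHeaterTemp, 65.0}}; }
Updates mix_reagent() { return Updates{Update{kReagentMixed, 1.0}}; }

DeviceIO run_and_apply() {
    DeviceIO device;
    std::future<Updates> preheat = std::async(std::launch::async, preheat_heater);
    std::future<Updates> mix = std::async(std::launch::async, mix_reagent);

    // Only the calling thread writes to the device, once each task is done.
    device.apply(preheat.get());
    device.apply(mix.get());
    return device;
}
```

Because the worker threads only return data, the device itself never needs a lock in this version.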

For now I think the validation could be done through a per-task function called ‘affects’, which returns the parts of the DeviceIO that the task will touch. Initially this could just be a bit mask built from an enum of the input/output/cell IDs that are available on the device.
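A bit mask check along those lines might be as small as this. The enum values, `TaskInfo` and `can_run_in_parallel` are hypothetical names, and I'm assuming 32 bits is enough for the IDs on the device.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical IDs for the parts of the device a task can touch.
enum DeviceCell : std::uint32_t {
    HeaterTemp   = 1u << 0,
    ReagentValve = 1u << 1,
    MixerMotor   = 1u << 2,
    SampleCell   = 1u << 3,
};

// Hypothetical task description: a name plus the mask 'affects' would return.
struct TaskInfo {
    const char* name;
    std::uint32_t affects;
};

// Returns true if no two tasks in the group touch the same part of the device.
bool can_run_in_parallel(const std::vector<TaskInfo>& group) {
    std::uint32_t claimed = 0;
    for (const TaskInfo& task : group) {
        if ((claimed & task.affects) != 0) {
            return false;  // this task overlaps with an earlier one
        }
        claimed |= task.affects;
    }
    return true;
}

// Example use with the tasks from the challenge.
void affects_example() {
    std::vector<TaskInfo> ok{
        {"Preheat heater", HeaterTemp},
        {"Mix reagent", ReagentValve | MixerMotor},
    };
    assert(can_run_in_parallel(ok));

    std::vector<TaskInfo> clash{
        {"Preheat heater", HeaterTemp},
        {"Heat Sample", HeaterTemp | SampleCell},
    };
    assert(!can_run_in_parallel(clash));
}
```

The builder could run this check over every set of nodes that may execute at the same time and report the offending task names.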

Whilst I’m here, here’s last night’s godbolt doodle. I discovered that std::function does a lot more work than a simple lambda: the compiler knows how to optimise lambdas, whereas std::function wraps the callable in a type-erased object that is harder to see through. In this example I do everything with native function pointers for a ridiculously tight system: https://godbolt.org/g/RTdnU1. This is more of a vanity project than something I will use for now, because it would put significant constraints on the design before I’ve had a chance to shape it. Still, it’s good to see what sort of methods I could use to optimise in the long run.
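This is not the code behind that link, just a tiny illustration of the same general idea, with names I've invented: a chain of steps held as plain function pointers, with no std::function in sight.

```cpp
#include <cassert>
#include <cstddef>

// A task step as a raw function pointer: no captures, no type erasure.
using TaskFn = int (*)(int);

int add_one(int x) { return x + 1; }
int double_it(int x) { return x * 2; }

// Runs each step in order, feeding the result of one into the next.
int run_chain(const TaskFn* steps, std::size_t count, int value) {
    for (std::size_t i = 0; i < count; ++i) {
        assert(steps[i] != nullptr);
        value = steps[i](value);
    }
    return value;
}

int chain_example() {
    // A captureless lambda converts implicitly to a plain function pointer.
    const TaskFn steps[] = {add_one, double_it, [](int x) { return x - 3; }};
    return run_chain(steps, 3, 5);  // ((5 + 1) * 2) - 3
}
```

The constraint is visible straight away: as soon as a step needs to capture state, it no longer fits in a `TaskFn`.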